Battle of the 4 Best Kubernetes Cloud Providers: GKE, AKS, EKS, and DOKS

Kubernetes is the de facto container orchestration system for creating, deploying, and operating containerized applications. It offers reliability and scalability, which makes it the foundation for modern cloud-native apps. Kubernetes is an open-source platform that you can install, run, and maintain in on-premises data centers. Alternatively, you can use a managed Kubernetes solution from a public cloud provider.

The four major Kubernetes providers are:

- Google Kubernetes Engine (GKE): Closely follows the latest changes in the Kubernetes open-source project;

- Azure Kubernetes Service (AKS): Known for rich integration points to other Azure services;

- Amazon Elastic Kubernetes Service (Amazon EKS): A later entrant in the Kubernetes arena, but a strong option thanks to the AWS ecosystem;

- DigitalOcean Kubernetes (DOKS): The newest Kubernetes service on the market.

In this post, we’ll compare these four options in terms of cluster configuration and setup, features and automations, scaling management, and pricing.

Cluster Configuration and Setup

Cluster configuration and setup can be time-consuming and tiresome, requiring a deep understanding of the cloud environment, including networking and resource management. There are two fundamental ways to create Kubernetes clusters in cloud providers: the web portal or CLI tools. While the web portal is more intuitive and user-friendly, the CLI lends itself better to automation. You can read further on building a Kubernetes environment and scaling for production in our blog article.

Creating a Kubernetes Cluster Using the Web Portal

First, let’s create a Kubernetes cluster using the web portal in GKE, AKS, Amazon EKS, and DOKS.

1. GKE

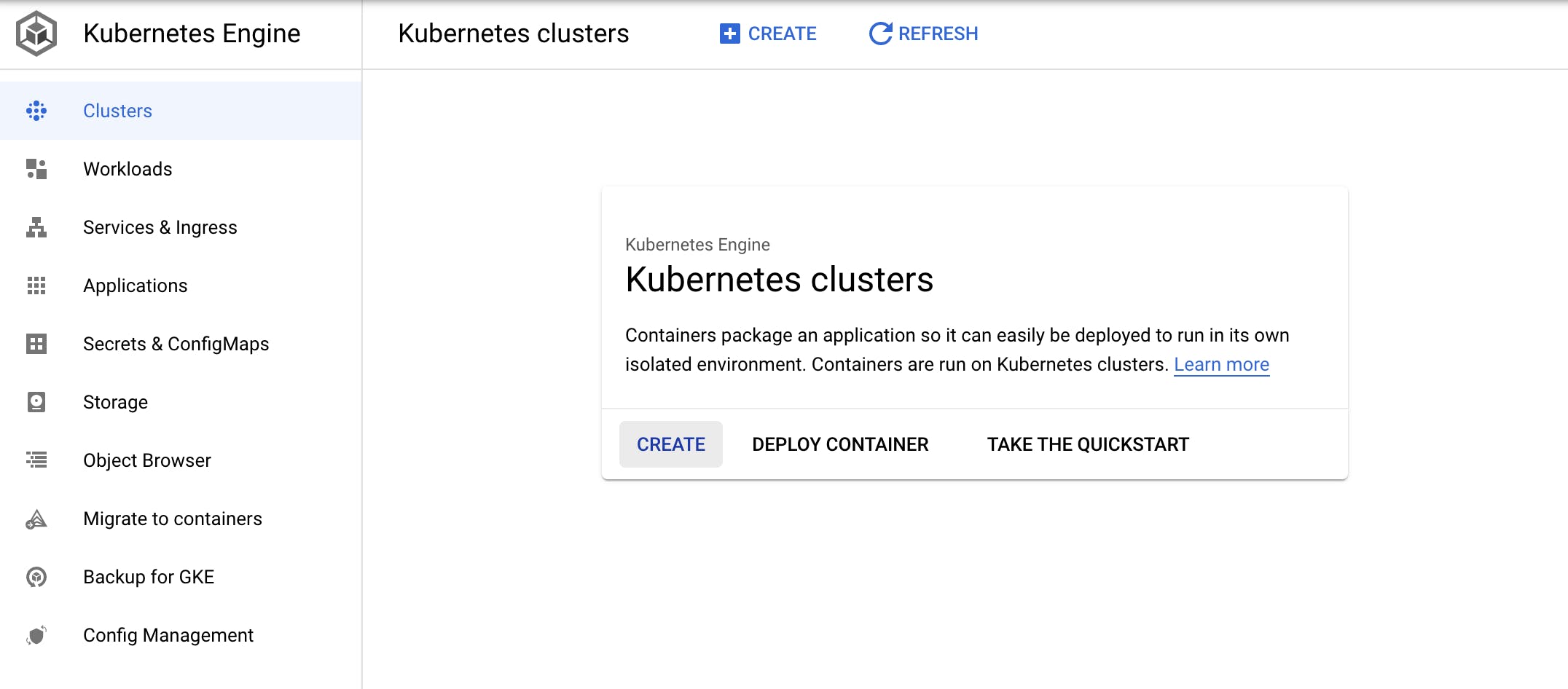

To create a Kubernetes cluster using the web portal in GKE, first select “Compute - Kubernetes Engine” in the main menu and then click “CREATE.”

Figure 1: GKE dashboard

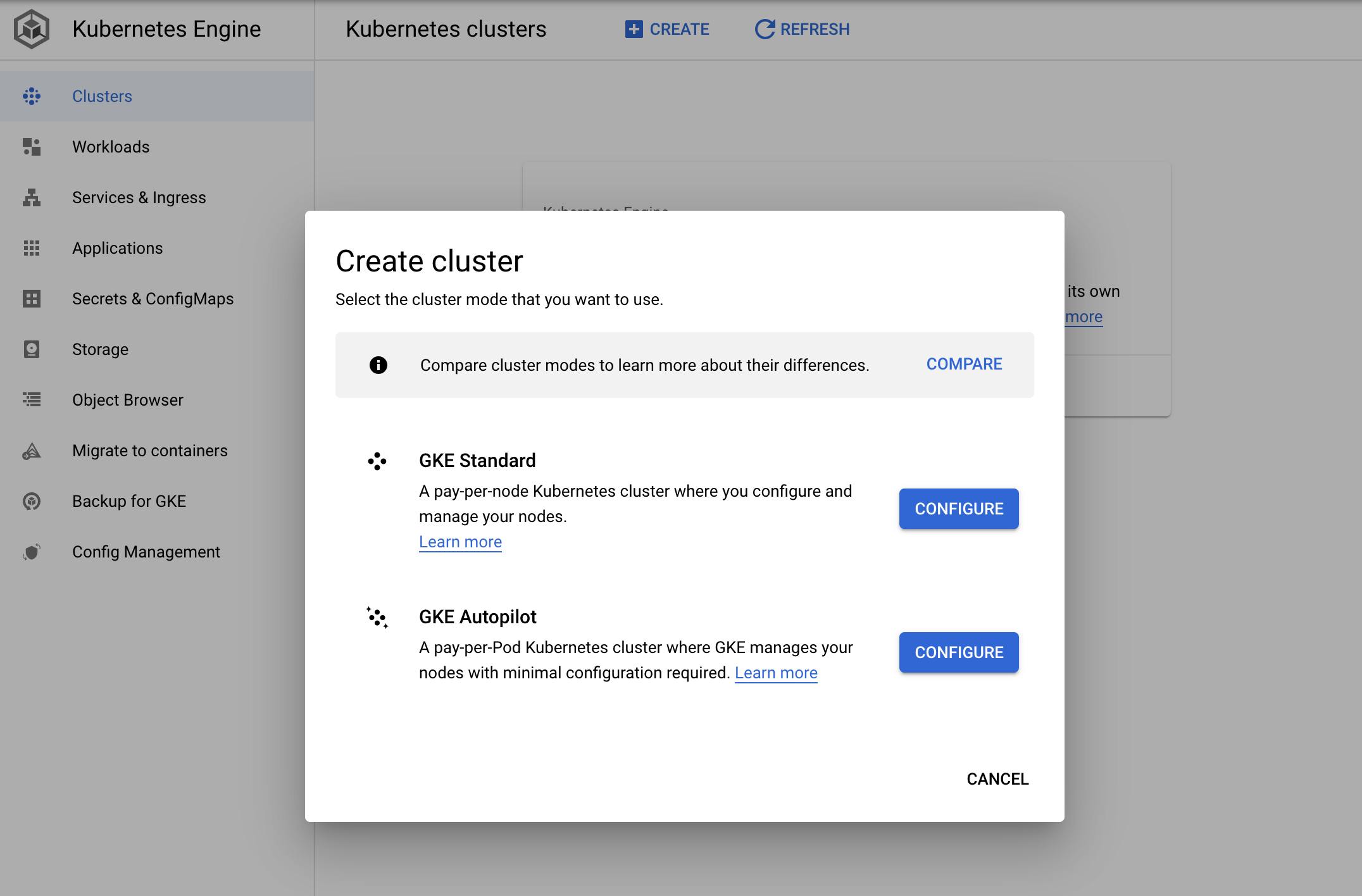

In the pop-up, select a cluster mode:

Figure 2: GKE cluster modes

There are currently two modes of GKE clusters: Autopilot and Standard. In Autopilot mode, GKE manages scaling with a streamlined configuration, and you pay for the pods running in your cluster. In Standard mode, you need to handle scaling, configure the nodes, and pay for the nodes connected to your Kubernetes cluster.
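To make the billing difference between the two modes concrete, here is a minimal sketch. All prices are hypothetical placeholders rather than published GKE rates, and the sketch assumes that Autopilot bills by the vCPU and memory that running pods request, while Standard bills by node.

```python
from dataclasses import dataclass

# Hypothetical placeholder prices, NOT published GKE rates.
HYPOTHETICAL_NODE_PRICE_PER_HOUR = 0.10      # one Standard-mode node
HYPOTHETICAL_POD_VCPU_PRICE_PER_HOUR = 0.05  # per requested vCPU
HYPOTHETICAL_POD_GIB_PRICE_PER_HOUR = 0.005  # per requested GiB of memory


@dataclass
class Pod:
    vcpu: float
    memory_gib: float


def standard_hourly_cost(node_count: int) -> float:
    """Standard mode: every attached node is billed, busy or idle."""
    return node_count * HYPOTHETICAL_NODE_PRICE_PER_HOUR


def autopilot_hourly_cost(pods: list[Pod]) -> float:
    """Autopilot mode: only the resources requested by running pods are billed."""
    return sum(
        p.vcpu * HYPOTHETICAL_POD_VCPU_PRICE_PER_HOUR
        + p.memory_gib * HYPOTHETICAL_POD_GIB_PRICE_PER_HOUR
        for p in pods
    )


pods = [Pod(vcpu=0.5, memory_gib=2.0), Pod(vcpu=0.5, memory_gib=2.0)]
print(f"Standard, 3 nodes: {standard_hourly_cost(3):.3f} per hour")
print(f"Autopilot, 2 pods: {autopilot_hourly_cost(pods):.3f} per hour")
```

With these made-up numbers, a lightly loaded cluster is cheaper in Autopilot mode because idle node capacity is not billed. The balance can shift as the nodes fill up.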

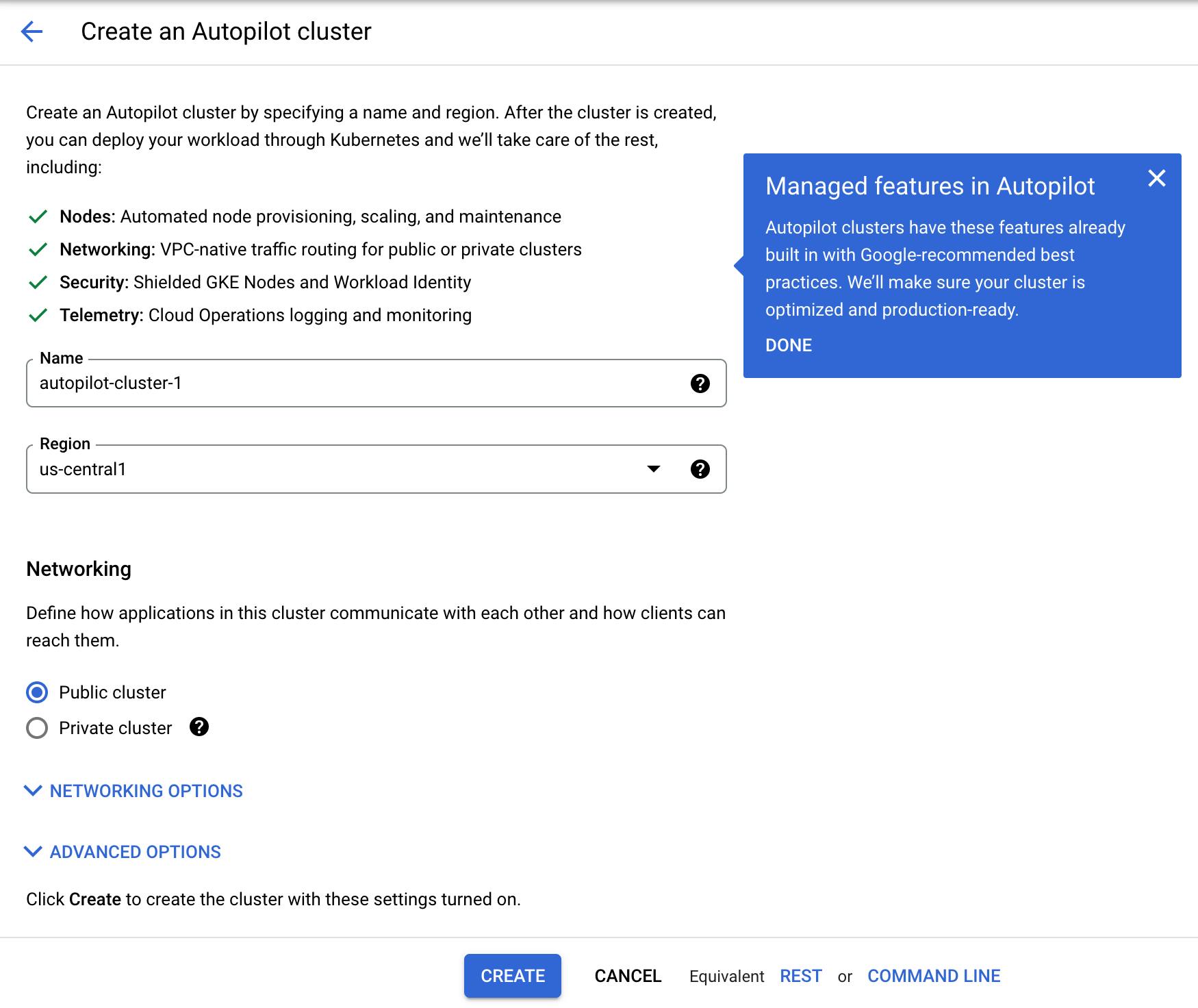

In Autopilot mode, you do not need to fill in a lot of information, as most preferences are preselected:

Figure 3: GKE Autopilot mode configuration

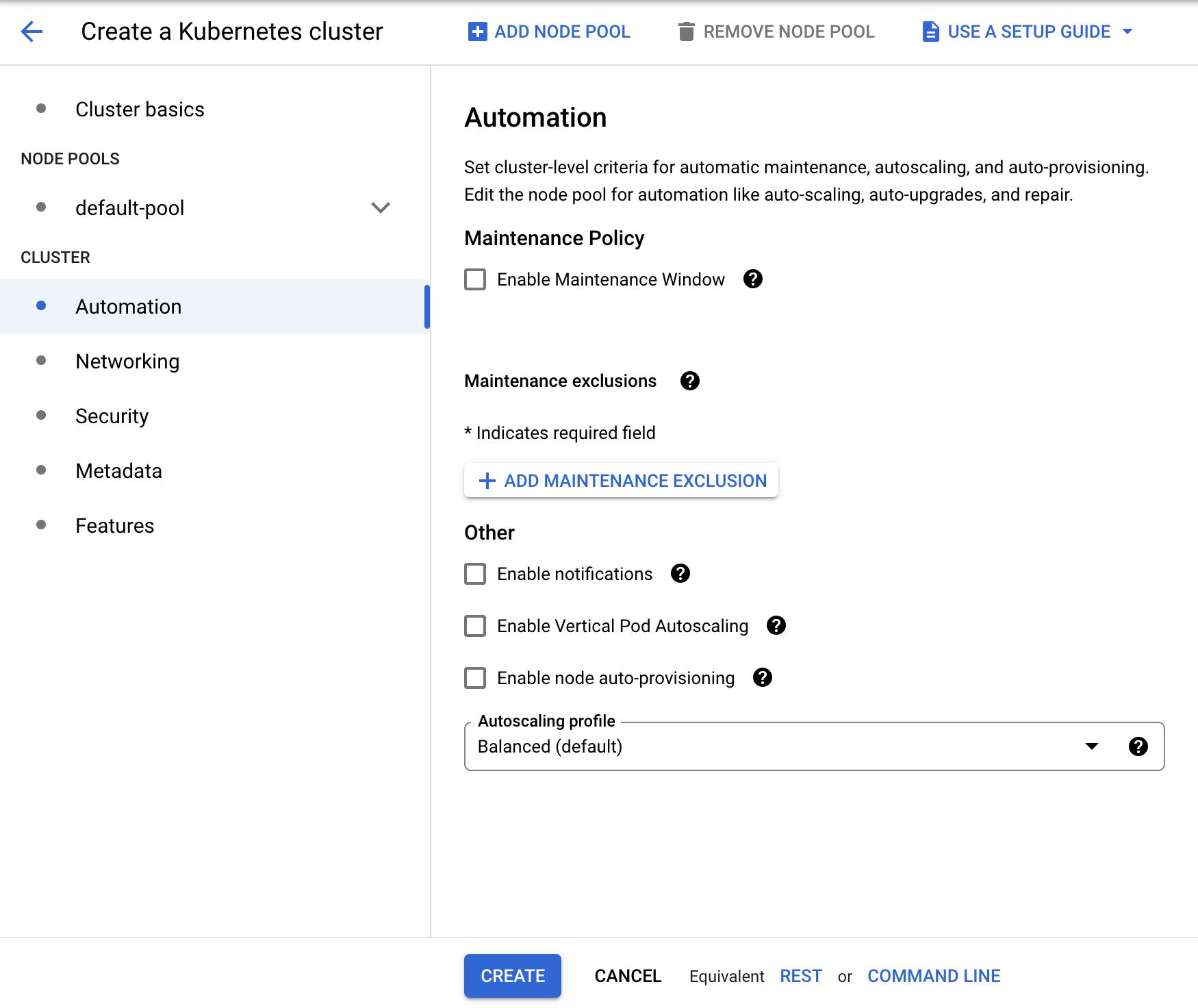

When you select Standard mode, you will be able to configure automation, networking, security, metadata, and features:

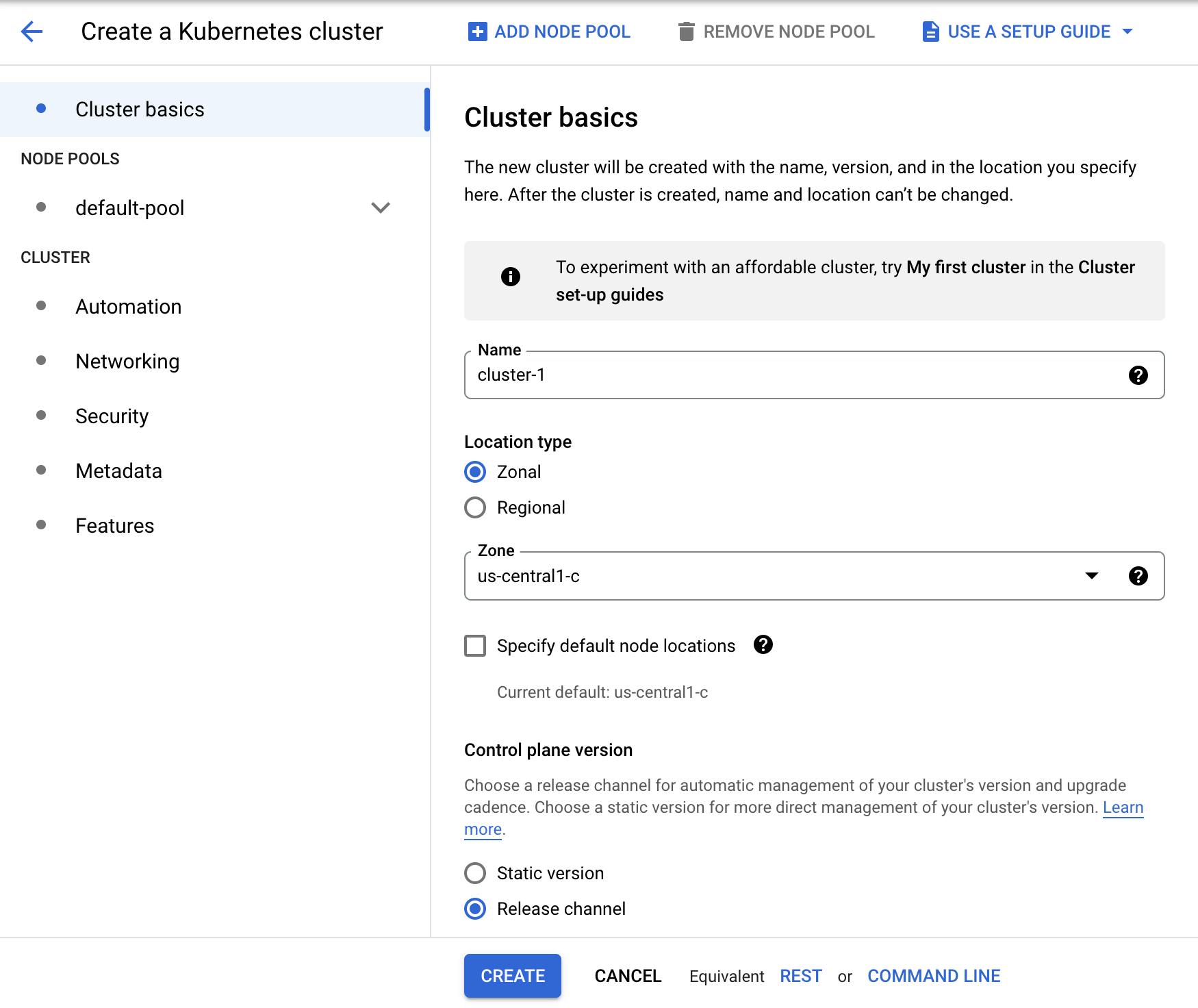

Figure 4: GKE Standard mode configuration

These configuration pages already have default values, so you can just fill in the cluster basics and click “CREATE.” In a couple of minutes, your cluster will be ready.

2. AKS

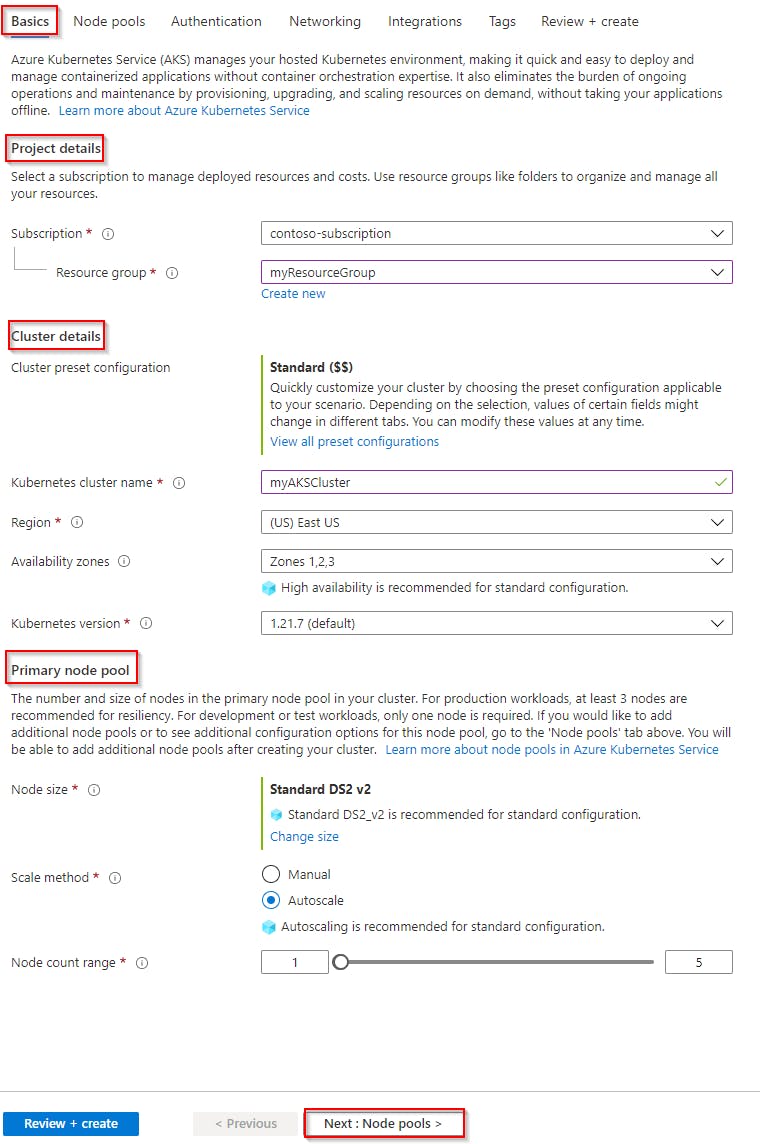

In AKS, you need to select “Containers” and then “Kubernetes Service” to get started. There are steps for basics, node pools, authentication, networking, integrations, and tags. Fortunately, you can use the default values in most of these steps.

On the “Basics” page, you will see project details, cluster details, and the primary node pool, as follows:

Figure 5: Create cluster basics in AKS (Source: Azure Docs)

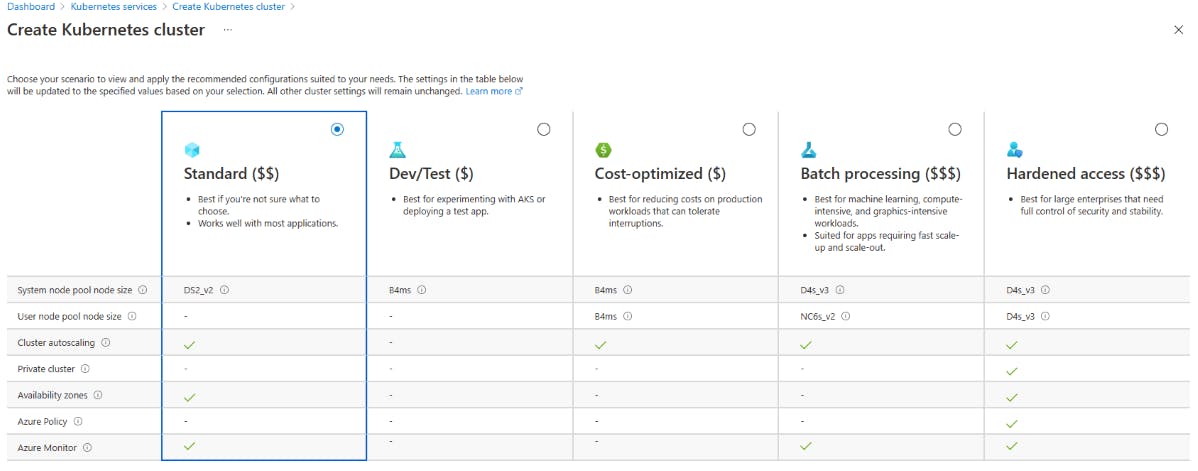

You can also use preset configurations, which are helpful for particular use cases, such as dev/test environments, cost-optimized clusters, and high-level security:

Figure 6: Cluster preset options in AKS (Source: Azure Docs)

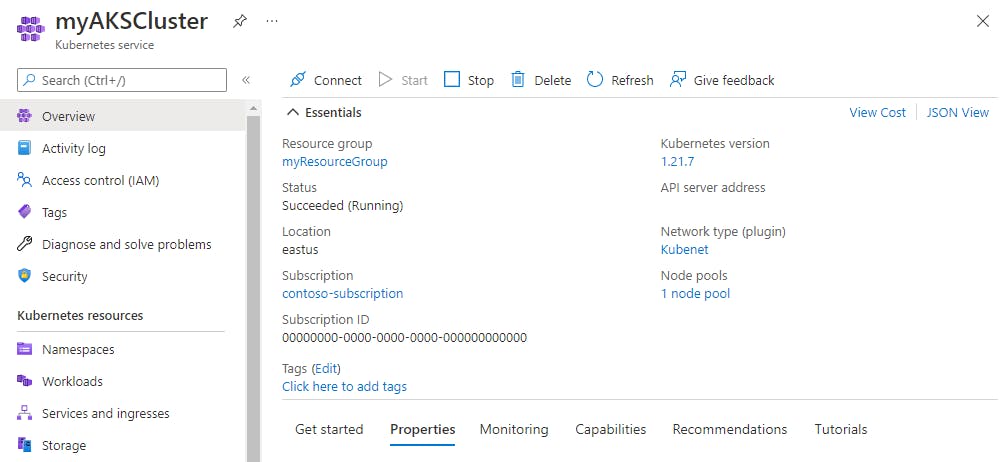

When you finish selecting your configurations, click the “Review + create” button. Your cluster will be ready in a couple of minutes:

Figure 7: AKS dashboard (Source: Azure Docs)

3. Amazon EKS

In order to create a Kubernetes cluster in Amazon EKS, you first need to fulfill the prerequisites: a VPC, a dedicated security group, and an IAM role for the cluster.
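The same prerequisites show up if you create the cluster with the aws CLI instead of the portal. The sketch below only builds the argument list for aws eks create-cluster and does not run it. The role ARN, subnet IDs, and security group ID are hypothetical placeholders that you would replace with the resources you created.

```python
import shlex


def eks_create_cluster_args(name, role_arn, subnet_ids, security_group_ids):
    """Build the argv for `aws eks create-cluster` from the prerequisites."""
    if not role_arn:
        raise ValueError("an IAM role ARN for the cluster is required")
    if not subnet_ids:
        raise ValueError("subnets from the cluster VPC are required")
    # Shorthand syntax accepted by --resources-vpc-config.
    vpc_config = "subnetIds=" + ",".join(subnet_ids)
    if security_group_ids:
        vpc_config += ",securityGroupIds=" + ",".join(security_group_ids)
    return [
        "aws", "eks", "create-cluster",
        "--name", name,
        "--role-arn", role_arn,
        "--resources-vpc-config", vpc_config,
    ]


# Hypothetical identifiers, for illustration only.
args = eks_create_cluster_args(
    name="sample-cluster",
    role_arn="arn:aws:iam::111122223333:role/sample-eks-cluster-role",
    subnet_ids=["subnet-aaa", "subnet-bbb"],
    security_group_ids=["sg-ccc"],
)
print(shlex.join(args))
```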

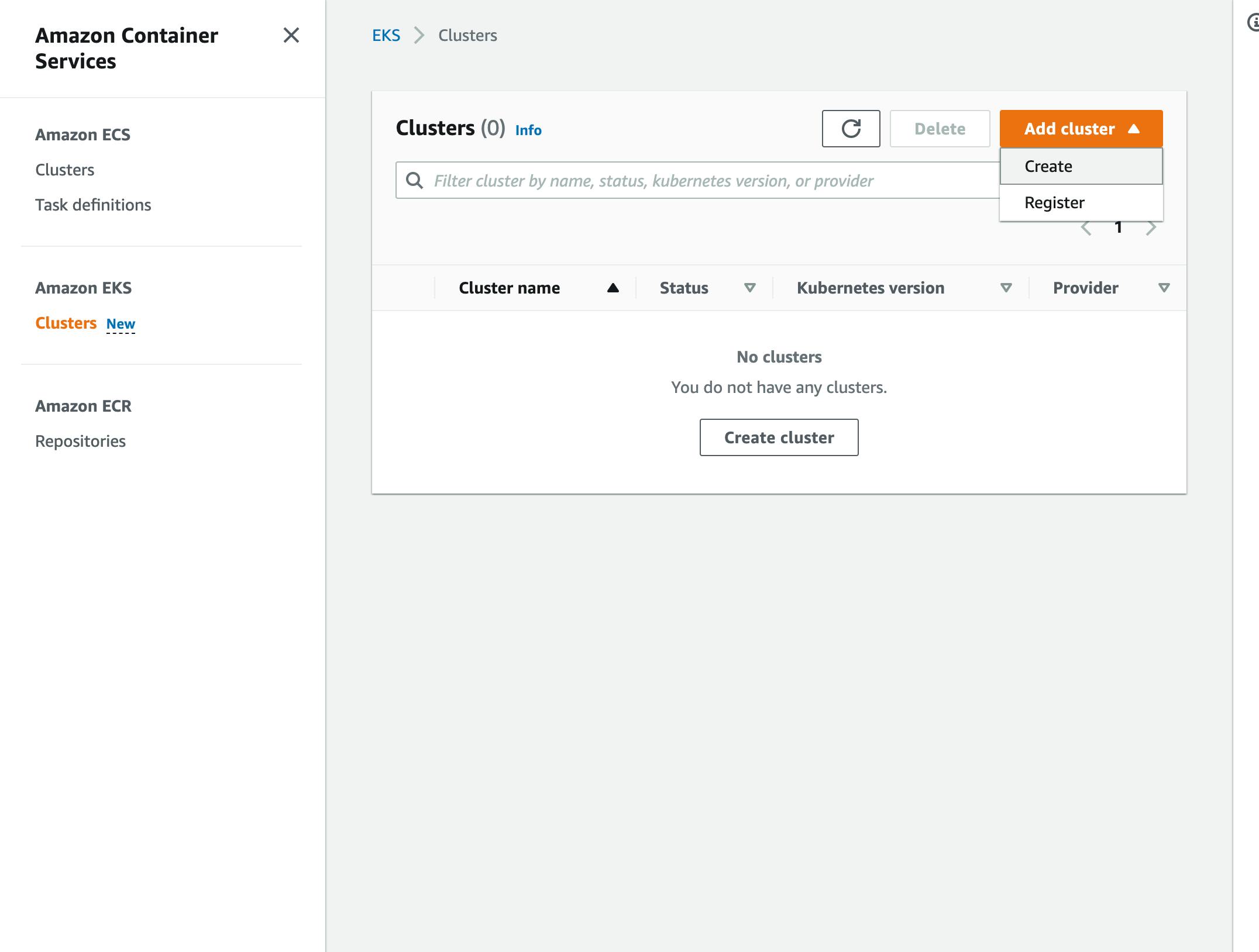

Then, go to the Amazon EKS service portal and select “Add cluster - Create”:

Figure 8: Amazon EKS Dashboard

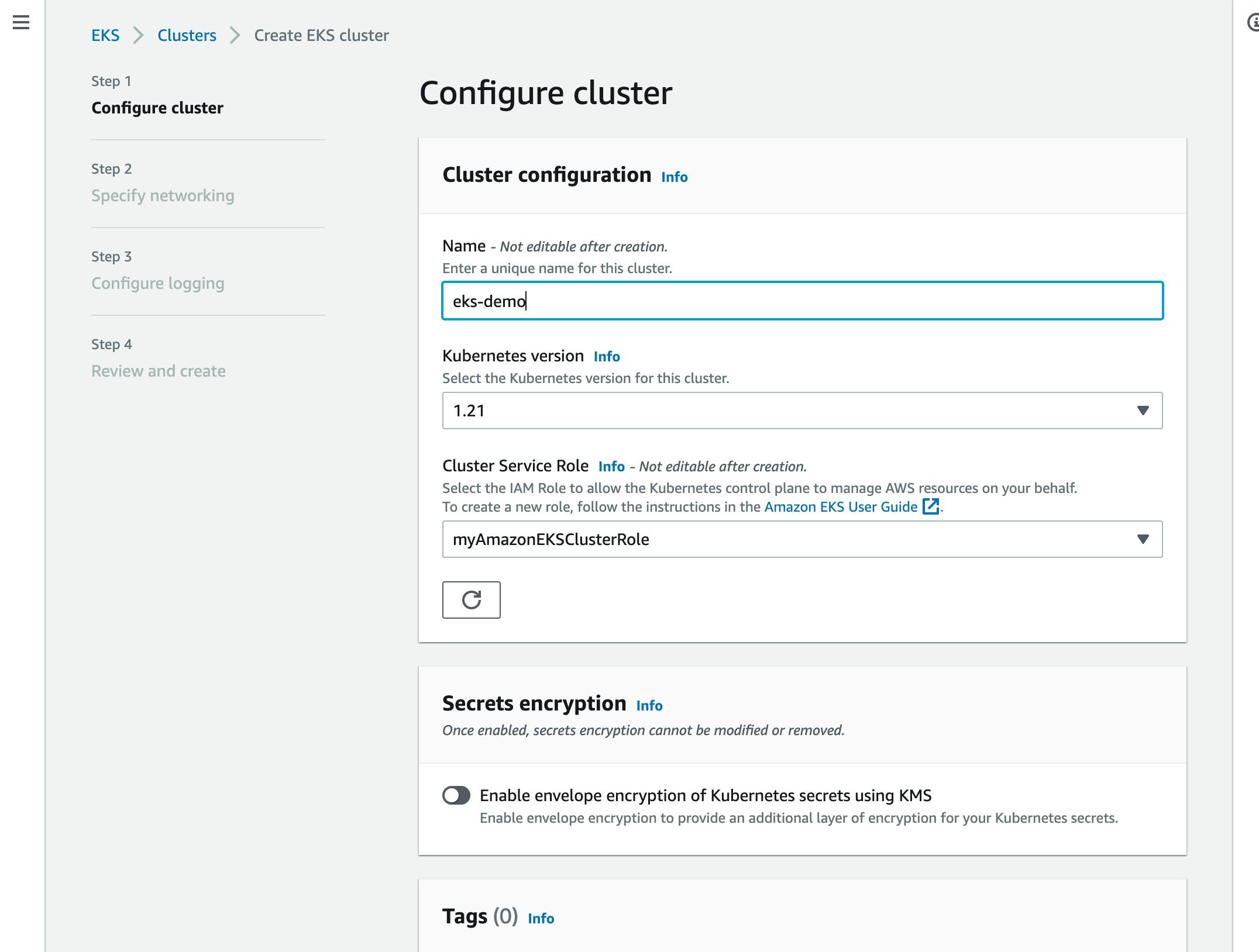

Next, provide the name, cluster version, and cluster service role, which you created as a prerequisite:

Figure 9: Amazon EKS - Configure cluster

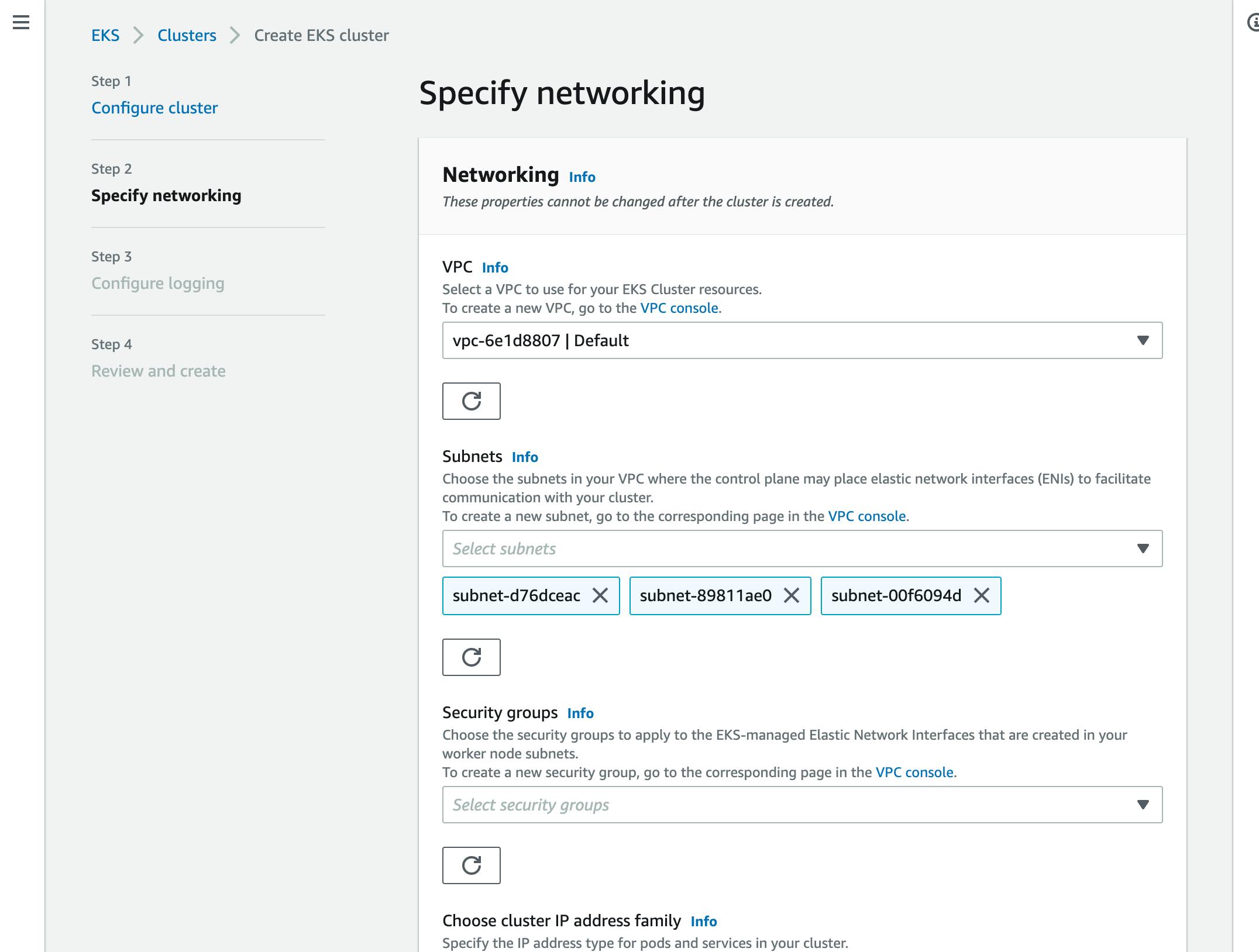

For networking, you also need to select the VPC you created for the cluster and security groups:

Figure 10: Amazon EKS - Networking

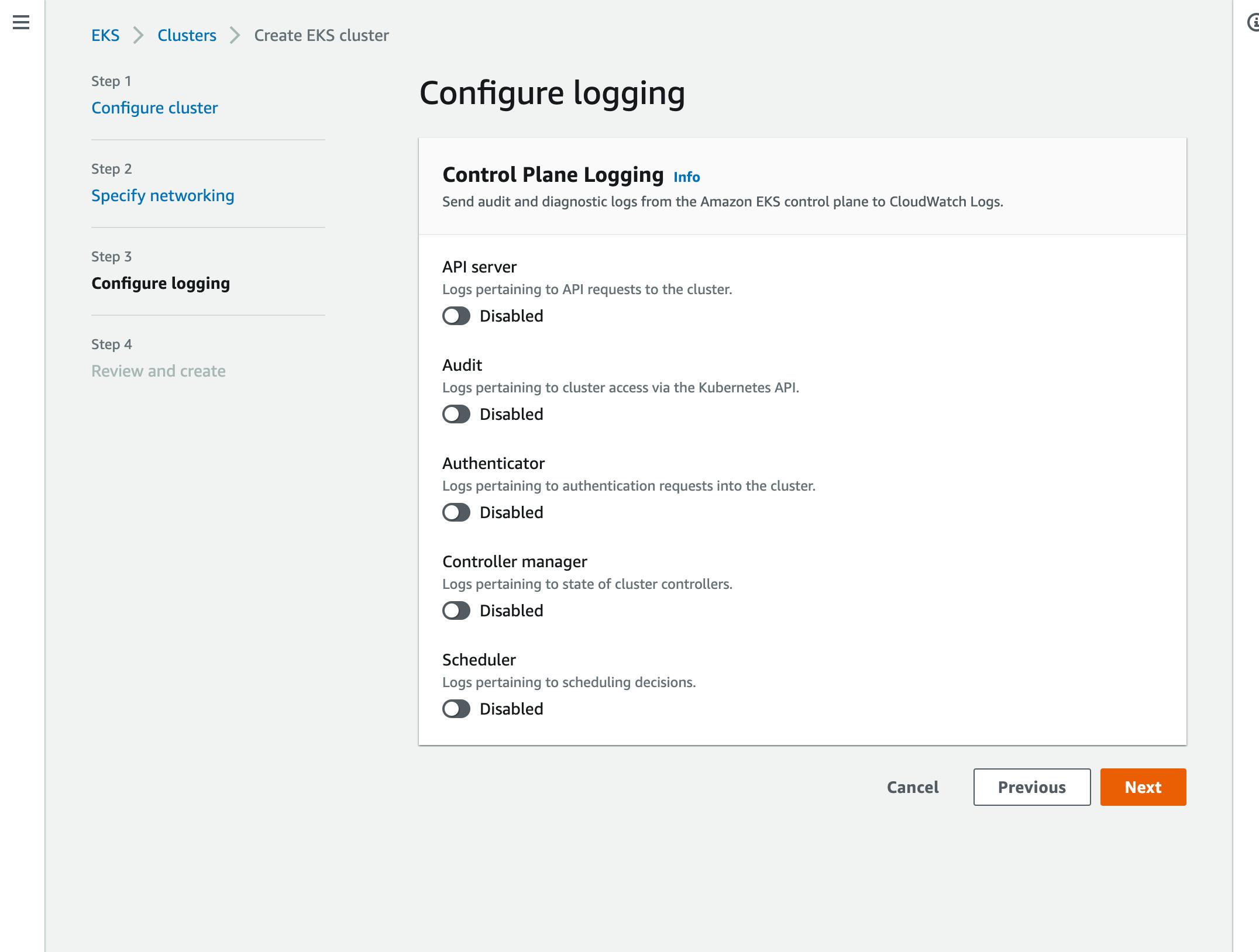

Then, you can configure logging for Kubernetes components:

Figure 11: Amazon EKS - Logging

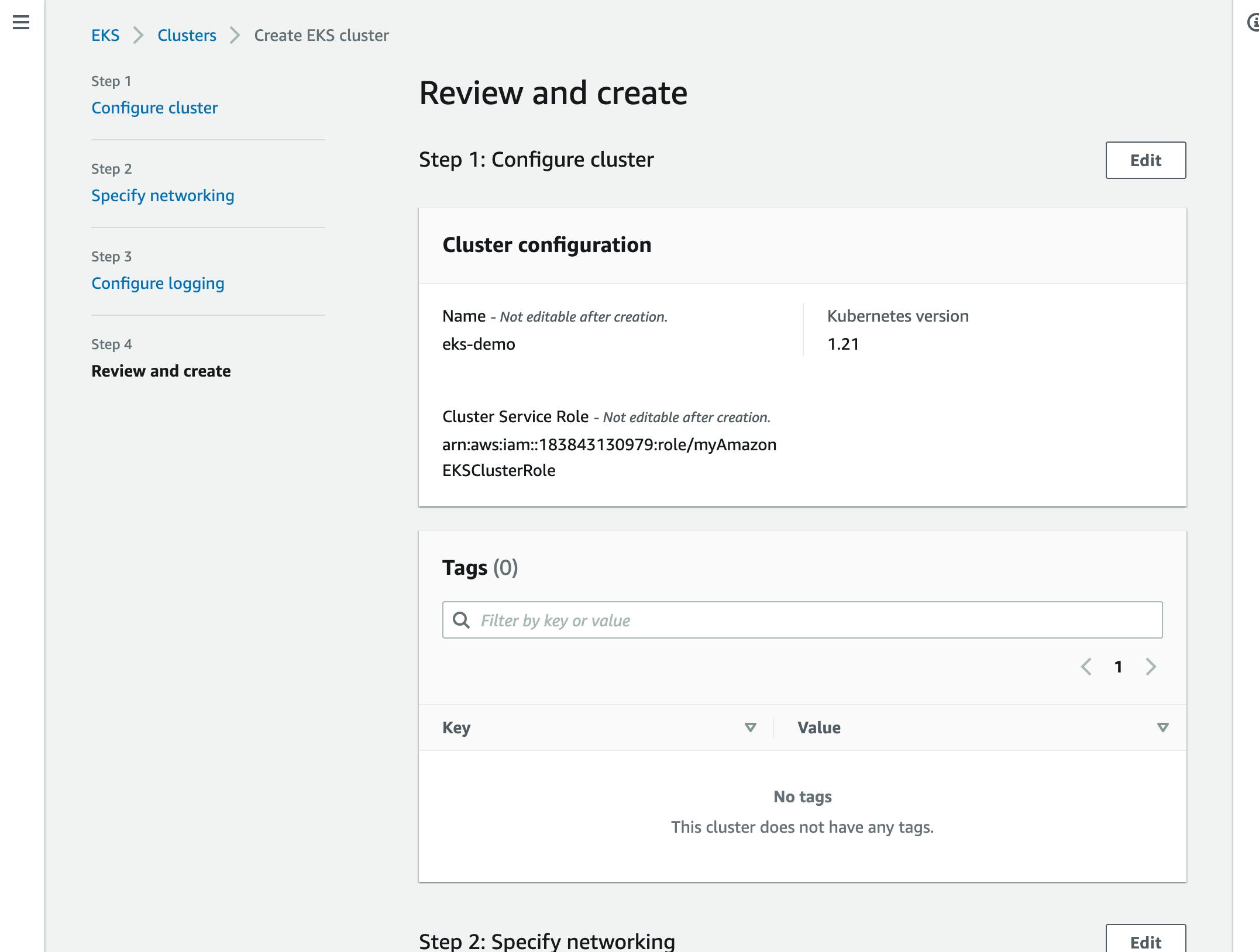

Finally, review the configuration and create your cluster:

Figure 12: Amazon EKS - Review

4. DOKS

Compared to other cloud providers, creating a Kubernetes cluster in DOKS is pretty straightforward. Simply select a Kubernetes version, region, and node pool:

Figure 13: Create cluster - DOKS (Source: DigitalOcean)

Comparison

When comparing the steps and configuration parameters for setting up a Kubernetes cluster with GKE, AKS, Amazon EKS, and DOKS, it’s clear that DOKS is the most straightforward and developer-focused. GKE and AKS are not complicated either, but they require multiple steps. Luckily, both offer preset configurations, making it easy to get started. Cluster configuration in Amazon EKS, on the other hand, is the most complicated, with prerequisites, multiple steps, and many parameters.

CLI Tools

All major cloud providers offer CLI tools to interact with cloud services and APIs. For developers, operating a cloud environment via a CLI is a common approach for automation and CI/CD pipelines. For Kubernetes services, CLI tools cover the complete cluster lifecycle, including creating the cluster, connecting to it, scaling, and managing the nodes.

1. GKE

In GKE, all Kubernetes commands are grouped under gcloud container clusters with subcommands such as create, delete, list, resize, upgrade, and get-credentials.

It is also easy to get started with a default configuration cluster with the following command: gcloud container clusters create sample-cluster.
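For automation, these subcommands can be assembled in a script. The following is a small sketch that only builds and prints the command lines. The helper name gcloud_clusters and the zone us-central1-a are our own illustrative choices, not part of the gcloud tool.

```python
import shlex


def gcloud_clusters(subcommand: str, cluster: str, *flags: str) -> list[str]:
    """Build the argv for a `gcloud container clusters` subcommand."""
    return ["gcloud", "container", "clusters", subcommand, cluster, *flags]


zone = ("--zone", "us-central1-a")  # example zone, adjust to your project

lifecycle = [
    gcloud_clusters("create", "sample-cluster", *zone),
    gcloud_clusters("get-credentials", "sample-cluster", *zone),
    gcloud_clusters("resize", "sample-cluster", "--num-nodes", "5", *zone),
    gcloud_clusters("delete", "sample-cluster", *zone),
]

for argv in lifecycle:
    print(shlex.join(argv))
```

Passing one of these lists to subprocess.run would execute it, provided gcloud is installed and authenticated.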

2. AKS

In AKS, you need to use the Azure CLI, called az. Commands for Kubernetes operations are grouped under az aks.

You can create a Kubernetes cluster with a default configuration as follows: az aks create -g MyResourceGroup -n MyManagedCluster.
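Because every az aks command needs a resource group and a cluster name, a thin wrapper keeps scripts readable. This is a sketch that only prints the commands. The helper az_aks is hypothetical, and the node count of 3 is an arbitrary example value.

```python
import shlex


def az_aks(subcommand: str, resource_group: str, cluster: str, *flags: str) -> list[str]:
    """Build the argv for an `az aks` subcommand using the long option names."""
    return [
        "az", "aks", subcommand,
        "--resource-group", resource_group,
        "--name", cluster,
        *flags,
    ]


create = az_aks("create", "MyResourceGroup", "MyManagedCluster",
                "--node-count", "3", "--generate-ssh-keys")
# get-credentials merges the cluster's kubeconfig entry for use with kubectl.
connect = az_aks("get-credentials", "MyResourceGroup", "MyManagedCluster")

print(shlex.join(create))
print(shlex.join(connect))
```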

3. Amazon EKS

In Amazon EKS, you can use one of two CLI tools to create and manage Kubernetes clusters. The first is aws, the general-purpose AWS CLI used for all other AWS operations. The second is eksctl, which was developed specifically for Amazon EKS operations. Both tools are officially supported and maintained. Unlike the aws tool, eksctl also sets up the prerequisites for you by using the Amazon EKS Getting Started CloudFormation templates.
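As a rough illustration of how little eksctl needs, the sketch below builds a single create command. The cluster name, region, and node count are example values, and the helper function is hypothetical.

```python
import shlex


def eksctl_create_cluster(name: str, region: str, nodes: int) -> list[str]:
    """Build the argv for `eksctl create cluster` with a fixed node count."""
    if nodes < 1:
        raise ValueError("nodes must be at least 1")
    return [
        "eksctl", "create", "cluster",
        "--name", name,
        "--region", region,
        "--nodes", str(nodes),
    ]


print(shlex.join(eksctl_create_cluster("sample-cluster", "us-west-2", 3)))
```

Compare this with aws eks create-cluster, which expects the IAM role and VPC resources to exist already.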

4. DOKS

In DOKS, you can find Kubernetes management commands under doctl kubernetes (for example, doctl kubernetes cluster create to create a cluster). Similarly, you can retrieve kubeconfig with doctl kubernetes cluster kubeconfig and connect to the cluster using kubectl.
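Put together, a create-and-connect flow with doctl might look like the sketch below. It only prints the commands. The region nyc1 and the node count are example values, and the function name is our own.

```python
import shlex


def doks_create_and_connect(cluster: str, region: str, count: int) -> list[list[str]]:
    """Return the commands to create a DOKS cluster and point kubectl at it."""
    return [
        ["doctl", "kubernetes", "cluster", "create", cluster,
         "--region", region, "--count", str(count)],
        # `kubeconfig save` merges the cluster credentials into the local kubeconfig.
        ["doctl", "kubernetes", "cluster", "kubeconfig", "save", cluster],
        ["kubectl", "get", "nodes"],
    ]


for argv in doks_create_and_connect("sample-cluster", "nyc1", 3):
    print(shlex.join(argv))
```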

Comparison

The CLI tools that cloud providers offer reflect the style of their web portals. Accordingly, the gcloud and doctl CLI tools are clean, integrated, and easy to use. The tool for AKS, az, requires a few more parameters (such as a resource group) and lacks easy-to-get-started commands. For AWS, eksctl is easy to use, but aws requires complex steps to handle Kubernetes operations.

Features and Automations

Kubernetes is a platform for deploying, scaling, and operating containerized applications in cloud environments. Therefore, it acts as a hub where cloud services can connect, create applications, check their deployment status, and run automation on the clusters. Each cloud provider offers a different set of features and automation integrations to the Kubernetes clusters running in its cloud.

1. GKE

In GKE, you can select automation for maintenance operations during the cluster creation:

Figure 14: GKE automation

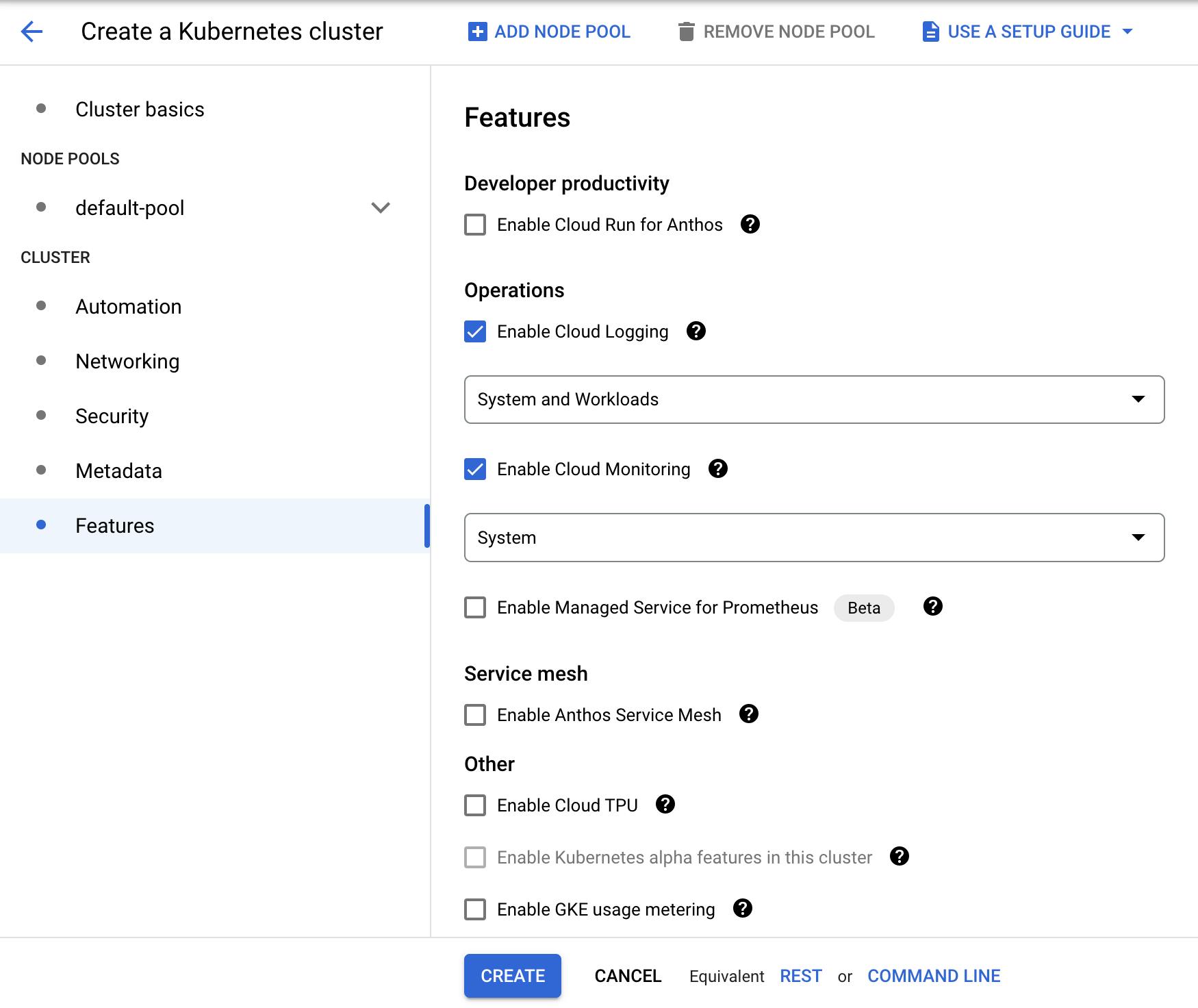

Similarly, you can select features based on cloud logging, monitoring, Prometheus, and metering:

Figure 15: GKE features

2. AKS

AKS offers a wide range of features to extend Kubernetes operations, authorization, and authentication capabilities. You can enhance your development experience using Visual Studio Code Kubernetes tools, Azure DevOps, and Azure Monitor. It is also possible to increase the security level of Kubernetes clusters using Azure Active Directory. In addition, you can implement the GitOps approach using Azure Arc-enabled Kubernetes clusters.

3. Amazon EKS

In Amazon EKS, you can configure cluster add-ons for networking and storage. These add-ons do not provide integration with external systems, but extend the operations of Kubernetes itself: networking with Amazon VPC CNI, CoreDNS, and kube-proxy, and storage with Amazon EBS CSI.

4. DOKS

DOKS provides a marketplace of applications, such as blogs, chats, or cluster extensions, that you can install on your Kubernetes clusters. You can also easily install monitoring stacks, Linkerd, OpenFaaS, or Grafana Loki on your Kubernetes clusters running in DOKS using the one-click applications catalog.

Scaling Management

The scalability of Kubernetes is one of its most essential features, making it the foundation for modern cloud-native applications. Kubernetes clusters are expected to scale up when resource usage increases and, similarly, scale down when usage decreases. Autoscaling is the automated approach to resizing Kubernetes clusters. It works by adding or removing nodes to meet demand.
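The core idea can be sketched in a few lines. This is a deliberately simplified model that sizes a node pool from the total vCPU requested and clamps the result to a minimum and maximum. Real autoscalers react to unschedulable pods and underutilized nodes rather than to a single aggregate number, so treat the function below as an illustration only.

```python
import math


def desired_node_count(requested_vcpu: float, vcpu_per_node: float,
                       min_nodes: int, max_nodes: int) -> int:
    """Nodes needed to fit the requested vCPU, clamped to [min_nodes, max_nodes]."""
    if vcpu_per_node <= 0:
        raise ValueError("vcpu_per_node must be positive")
    if min_nodes > max_nodes:
        raise ValueError("min_nodes must not exceed max_nodes")
    needed = math.ceil(requested_vcpu / vcpu_per_node)
    return max(min_nodes, min(max_nodes, needed))


# Usage rises: 10 vCPU on 4-vCPU nodes needs 3 nodes (scale up).
print(desired_node_count(10, 4, min_nodes=1, max_nodes=5))
# Usage drops: 0.5 vCPU would fit on 1 node, but the minimum of 2 is kept.
print(desired_node_count(0.5, 4, min_nodes=2, max_nodes=5))
```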

1. GKE

In GKE, Autopilot mode handles autoscaling of the nodes for you, whereas in Standard mode you manage and configure the node pools yourself.

2. AKS

In AKS, you can set up autoscaling of node pools by setting a minimum and maximum size, as shown in Figure 5. You can also make the same changes using the az tool and setting the --enable-cluster-autoscaler parameter with --min-count and --max-count.
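As a sketch of the CLI route, the helper below builds an az aks update command with those three parameters. The function name and the bounds of 1 and 3 are example choices, and the command is printed rather than executed.

```python
import shlex


def aks_enable_autoscaler(resource_group: str, cluster: str,
                          min_count: int, max_count: int) -> list[str]:
    """Build the argv that enables the cluster autoscaler on an existing AKS cluster."""
    if min_count > max_count:
        raise ValueError("min_count must not exceed max_count")
    return [
        "az", "aks", "update",
        "--resource-group", resource_group,
        "--name", cluster,
        "--enable-cluster-autoscaler",
        "--min-count", str(min_count),
        "--max-count", str(max_count),
    ]


print(shlex.join(aks_enable_autoscaler("MyResourceGroup", "MyManagedCluster", 1, 3)))
```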

3. Amazon EKS

Amazon EKS supports two autoscaling products: Kubernetes Cluster Autoscaler and Karpenter. Kubernetes Cluster Autoscaler works with Amazon EC2 Auto Scaling groups, while Karpenter works with the Amazon EC2 fleet. The major drawback here is that you need to install and configure these tools in your Kubernetes clusters yourself.

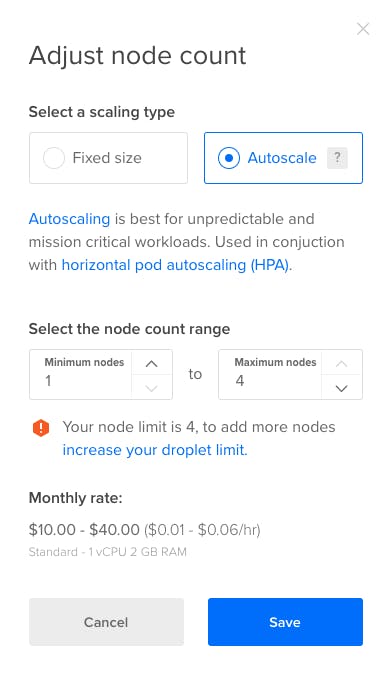

4. DOKS

DOKS offers an out-of-the-box cluster autoscaler to adjust the node pools' size automatically. You can enable autoscaling using the web portal or doctl by simply setting a minimum and maximum cluster size:
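With doctl, this comes down to a node pool update. The sketch below builds that command without running it. The cluster and pool names are hypothetical placeholders, as is the helper itself.

```python
import shlex


def doks_enable_autoscale(cluster: str, pool: str,
                          min_nodes: int, max_nodes: int) -> list[str]:
    """Build the argv that turns on autoscaling for one DOKS node pool."""
    if min_nodes > max_nodes:
        raise ValueError("min_nodes must not exceed max_nodes")
    return [
        "doctl", "kubernetes", "cluster", "node-pool", "update", cluster, pool,
        "--auto-scale",
        "--min-nodes", str(min_nodes),
        "--max-nodes", str(max_nodes),
    ]


# "sample-cluster" and "sample-pool" are placeholder names.
print(shlex.join(doks_enable_autoscale("sample-cluster", "sample-pool", 1, 5)))
```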

Figure 16: DOKS autoscaling (Source: DigitalOcean Docs)

Comparison

Out of our four options, GKE’s Autopilot mode provides the most advanced and easy-to-use autoscaler. Following that, the AKS and DOKS cluster autoscalers are also easy to configure and use. On the other hand, Amazon EKS does not offer autoscaling as a service, so you need to configure and manage scalability tools separately.

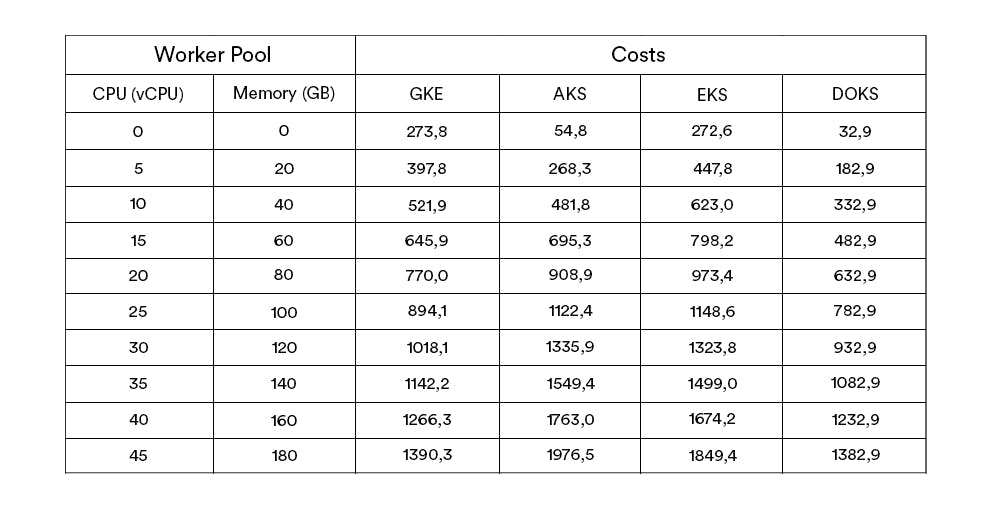

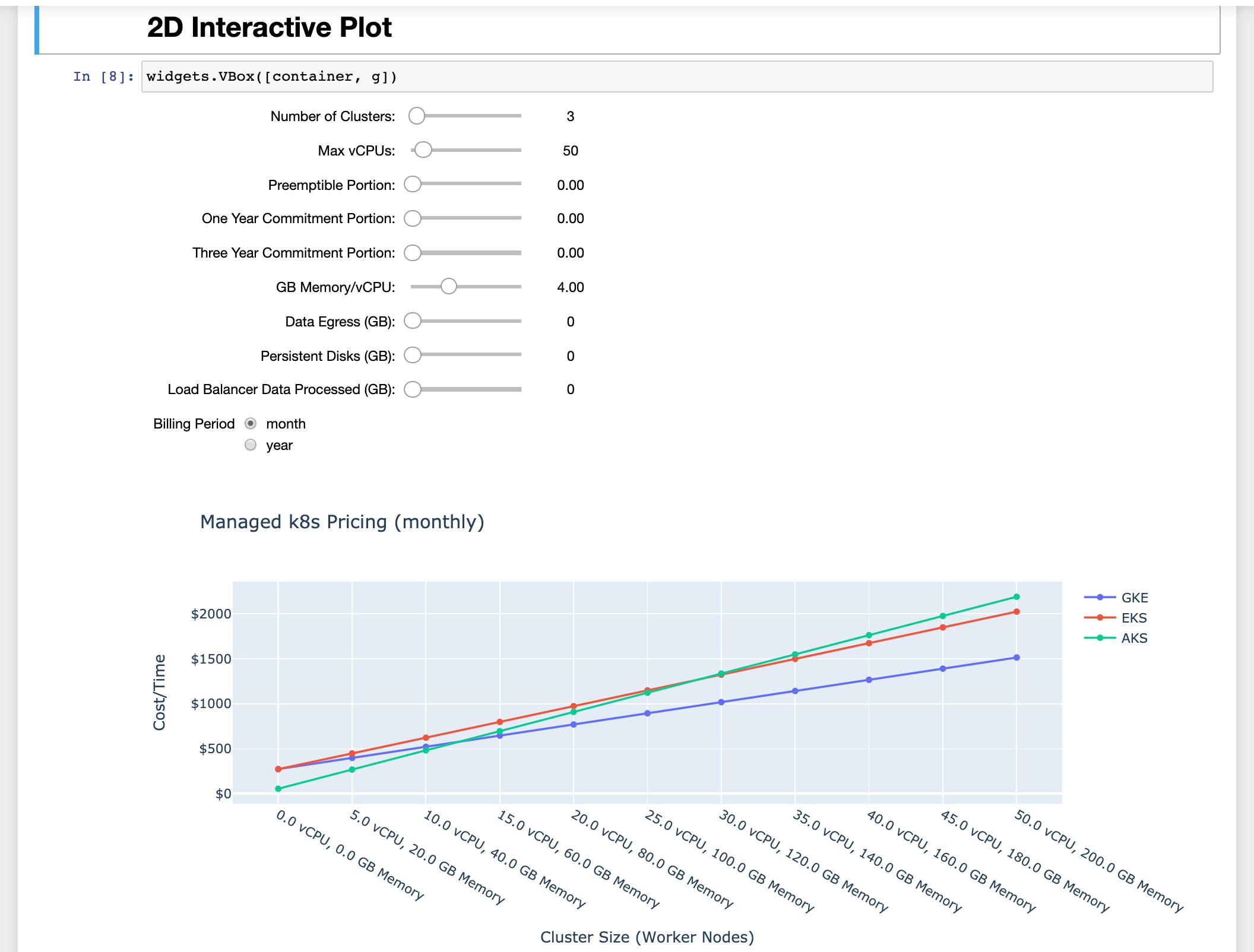

Pricing

Running a managed Kubernetes cluster comes at a cost, as Kubernetes will deploy containerized applications and use various resources, such as compute, networking, and storage. Kubernetes nodes will consume compute resources, such as vCPU and memory, and applications will consume ingress and egress network bandwidth. In addition, GCP and AWS charge a fixed cluster-management fee for each Kubernetes cluster.

Based on your application’s scale and resource usage, there are various workload scenarios to consider. Here is a sample calculation for increasing usage for the four cloud providers we’re discussing:

Figure 17: Managed Kubernetes cluster pricing (Source: GitHub)

Since AKS and DOKS do not charge a cluster-management fee, they are the cheapest options for running a large number of small clusters. On the other hand, GKE is the most affordable if you are running only a few large clusters. In addition, you should consider the availability of spot or preemptible instances and discounts when making long-term plans with your cloud provider.
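The effect of a per-cluster fee is easy to see with a little arithmetic. The sketch below uses made-up hourly prices, not real provider rates, to compare many small clusters against one large cluster with the same total number of nodes.

```python
HOURS_PER_MONTH = 730  # average number of hours in a month


def monthly_cost(clusters: int, nodes_per_cluster: int,
                 node_price_per_hour: float,
                 management_fee_per_hour: float) -> float:
    """Total monthly cost: per-cluster management fee plus node compute."""
    per_cluster_hourly = management_fee_per_hour + nodes_per_cluster * node_price_per_hour
    return clusters * per_cluster_hourly * HOURS_PER_MONTH


# Hypothetical prices for illustration only.
NODE_PRICE = 0.05
FEE = 0.10

for label, clusters, nodes in [("20 small clusters", 20, 1), ("1 large cluster", 1, 20)]:
    with_fee = monthly_cost(clusters, nodes, NODE_PRICE, FEE)
    without_fee = monthly_cost(clusters, nodes, NODE_PRICE, 0.0)
    print(f"{label}: {with_fee:.2f} with fee, {without_fee:.2f} without fee")
```

With these placeholder numbers, the fee triples the bill for twenty single-node clusters but adds only about ten percent to one twenty-node cluster. That is why the fee matters most when you run many small clusters.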

Summing Up: The Best Kubernetes Cloud Provider

Every cloud provider comes with its own advantages and disadvantages when running Kubernetes clusters. GKE, AKS, Amazon EKS, and DOKS differ considerably in how you create and operate clusters and manage environments. You can read further on environment management in our blog article, What Is Environment as a Service (EaaS) and Do You Need It? With an environment-as-a-service solution like Bunnyshell, you can easily manage your configurations and clusters, save time, and focus on developing your cloud-native application. EaaS is beneficial for any software business, as it lowers costs, increases speed, and creates new possibilities for experimentation.

We can help you create and manage dev, staging, and production environments on Kubernetes. Check out our free 14-day Bunnyshell trial and enable high-velocity development now!
